Jetson Orin Nano vs NX vs AGX for manufacturing computer vision: a production benchmark

We benchmarked Jetson Orin Nano, Orin NX, and AGX Orin on a real manufacturing CV deployment — defect detection across 24 cameras. Here's the throughput, power draw, and cost-per-camera breakdown.

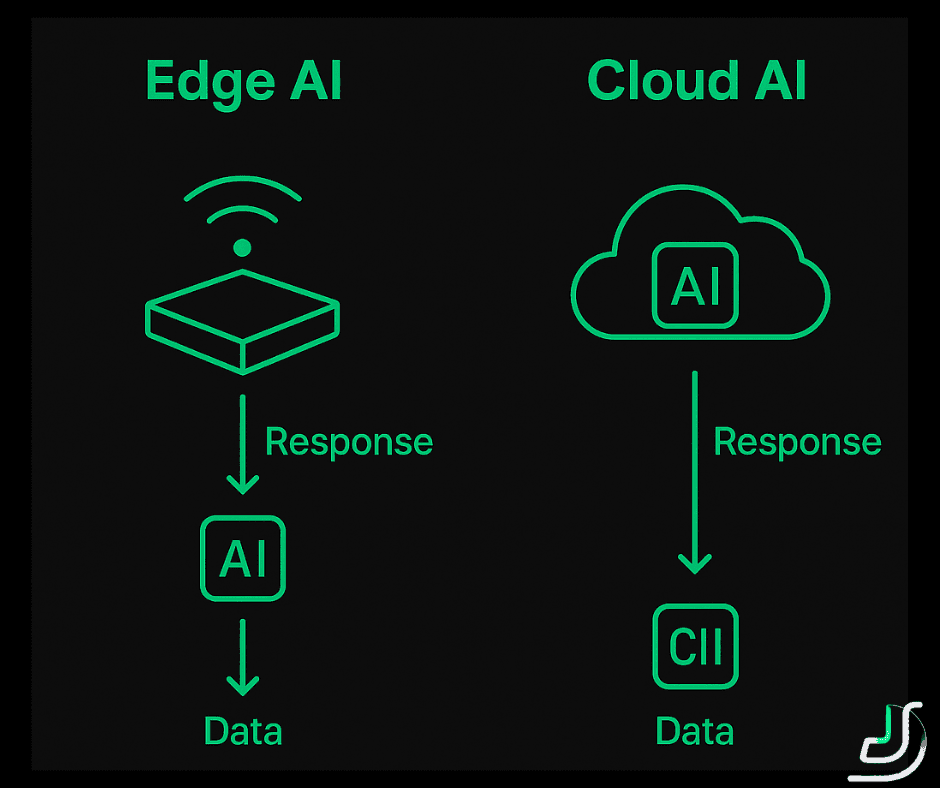

If you're shipping computer vision into a factory in 2026, you're picking a Jetson. The question stopped being "edge or cloud" and became "which Jetson tier, and how do I size it." NVIDIA's marketing pushes you toward the AGX Orin. The reality is that the AGX is overkill for ~70% of manufacturing CV workloads we've shipped, and underutilizing a $1,999 dev kit is a worse outcome than slightly over-tuning a $499 one.

We ran a controlled benchmark on a production deployment we built for a US metal-stamping manufacturer in early 2026. Twenty-four cameras across three production lines, defect detection on stamped automotive parts, real-time pass/fail decisions inline with the conveyor. We tested every Jetson Orin tier on the same workload, with the same model, same input pipeline, same TensorRT optimizations. This article is the result.

This isn't a marketing piece. We've shipped both small and large Jetson deployments. We have opinions. We'll show you the numbers.

TL;DR

For most manufacturing CV workloads (single line, 4-8 cameras, 1080p input, YOLOv8m-class models, latency budget under 100ms), Jetson Orin NX 16GB is the right default. It handles the work, runs at ~20W, costs $899 for the dev kit, and has thermal headroom for industrial enclosures.

Orin Nano 8GB is the right choice when you have ≤4 cameras, can use a smaller model (YOLOv8s or smaller), and care about per-unit cost (e.g., 100+ deployment sites).

AGX Orin 64GB is the right choice when you need to run multi-stage pipelines (e.g., detection → tracking → re-identification → OCR) on a single device, or when you need 12+ camera streams aggregated into one node, or when you have a future-proofing budget and want headroom for model upgrades.

Cloud GPU inference (AWS g5, GCP equivalent) still wins for batch processing of historical footage, model training, or when latency budgets are >500ms and connectivity is reliable. For real-time line-side inference, edge wins on every dimension that matters.

The decision tree at the end of this article walks through the three questions that determine your tier.

The deployment context

The client manufactures stamped automotive components — brackets, mounts, structural reinforcements. Three production lines, eight cameras each, 24 cameras total. Each camera runs at 1080p (1920×1080), 30 FPS, mounted to capture parts as they exit the press at conveyor speeds of ~1.2 m/s.

The CV task: defect detection across six failure modes (cracks, incomplete stamping, surface deformation, edge burrs, alignment shift, contamination). Decisions must arrive within 100ms of capture so the line's reject mechanism (a pneumatic arm) has time to fire before the next part arrives.

Pre-existing setup: cloud-based inference on AWS using g5.xlarge instances (NVIDIA A10G, 24GB VRAM). Network latency was 38ms p95 from the factory floor to AWS us-east-1. Inference latency was 22ms p95. End-to-end: ~70ms p95 — within budget, but with no margin. Two outages over six months caused by intermittent factory network problems triggered the migration to edge.

Migration target: Jetson-based edge nodes deployed line-side, with cloud only used for model retraining and aggregated analytics.

The hardware lineup tested

We tested four configurations on the same workload:

| Device | Compute | Memory | TDP | Dev kit price | Production module price |
|---|---|---|---|---|---|
| Orin Nano 8GB | 40 TOPS sparse INT8 | 8GB LPDDR5 | 7-15W | $499 | $399 |
| Orin NX 16GB | 100 TOPS sparse INT8 | 16GB LPDDR5 | 10-25W | $899 | $699 |
| AGX Orin 64GB | 275 TOPS sparse INT8 | 64GB LPDDR5 | 15-60W | $1,999 | $1,799 |
| g5.xlarge (cloud) | NVIDIA A10G, 250 TOPS INT8 | 24GB GDDR6 | 150W | n/a | $1,006/month |

For Jetson production deployment we used the SoM (System on Module) with custom carrier boards in NEMA 4 enclosures with active cooling — not dev kits. Pricing reflects that.

Workload definition

Real production traffic from the metal-stamping deployment:

- Input: 1080p video at 30 FPS per camera, BGR8, 8-bit

- Model: YOLOv8m fine-tuned on 47k labeled defect images, 6 output classes

- Pipeline: hardware-decoded camera frame → preprocess (resize to 640×640) → TensorRT inference → NMS → publish result over MQTT

- Latency budget: 100ms p95 end-to-end (frame capture to MQTT publish)

- Cameras per device: variable — we tested 1, 4, 8, and 12 simultaneous streams per Jetson

Each test ran for 30 minutes after a 5-minute warm-up on production traffic (real parts, real defects). Numbers below are p95 inference latency and sustained FPS aggregated across cameras on the device.
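For readers reproducing the methodology, here is a minimal sketch of how the two reported metrics could be computed from raw per-frame logs. The nearest-rank percentile definition and the sample values are assumptions for illustration; the article does not say which percentile method was used.

```python
def p95(samples_ms):
    """Nearest-rank 95th percentile of per-frame latencies (milliseconds)."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n), 1-based
    return ordered[rank - 1]


def sustained_fps(frames_processed, window_s):
    """Frames completed per second over the whole measurement window."""
    return frames_processed / window_s


# Hypothetical samples, not measurements from the deployment.
latencies_ms = [12.0] * 90 + [14.0] * 8 + [40.0] * 2
example_p95 = p95(latencies_ms)
example_fps = sustained_fps(frames_processed=54_000, window_s=30 * 60)
print(example_p95, example_fps)
```

Note that p95 hides the two 40ms outliers in this sample, which is why the sustained FPS figure is reported alongside it.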

The results: throughput

YOLOv8m, INT8 quantization, with FP16 for the layers TensorRT couldn't quantize without accuracy loss. Same model and build settings across Jetson tiers; each tier gets its own .engine build, because TensorRT engines are hardware-specific.

Single camera, 1080p @ 30 FPS

| Device | Inference latency p95 | Sustained FPS | GPU utilization |
|---|---|---|---|
| Orin Nano 8GB | 41ms | 24 FPS | 89% |
| Orin NX 16GB | 14ms | 30 FPS (camera-bound) | 41% |
| AGX Orin 64GB | 5ms | 30 FPS (camera-bound) | 12% |
| g5.xlarge | 22ms (+38ms network) | 30 FPS | 8% |

What this shows: at one camera, Orin Nano starts to drop frames — it can't keep up with 30 FPS on YOLOv8m. We had to drop to YOLOv8s on Nano to maintain real-time throughput, with measurable accuracy degradation (mAP@0.5 dropped from 0.91 to 0.86 — borderline acceptable depending on the failure mode).

Four cameras simultaneously

| Device | Inference latency p95 | Sustained FPS (aggregate) | GPU utilization |
|---|---|---|---|
| Orin Nano 8GB | dropped frames | ~58 FPS aggregate | 99% (saturated) |
| Orin NX 16GB | 22ms | 120 FPS aggregate | 78% |
| AGX Orin 64GB | 8ms | 120 FPS aggregate | 31% |
| g5.xlarge | 28ms (+38ms network) | 120 FPS aggregate | 24% |

What this shows: Orin Nano can't sustain four cameras at YOLOv8m — it falls behind, the input queue grows, latency exceeds budget. NX handles four cameras with comfortable headroom. AGX Orin is overprovisioned at this load by 3×.
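To see why falling behind is fatal rather than merely slow, here is a rough sketch of queue growth when frames arrive faster than the device can process them. It assumes a simple unbounded FIFO with no frame dropping, which is a simplification; a real pipeline would cap the queue and drop frames instead.

```python
def backlog_after(arrival_fps, service_fps, seconds):
    """Frames waiting in the input queue after `seconds`, with no frame dropping."""
    return max(0.0, (arrival_fps - service_fps) * seconds)


def queue_delay_ms(backlog_frames, service_fps):
    """Extra wait for a newly arrived frame stuck behind the current backlog."""
    return 1000.0 * backlog_frames / service_fps


# Four 30 FPS cameras arriving at 120 FPS against ~58 FPS of sustained throughput.
backlog = backlog_after(arrival_fps=120, service_fps=58, seconds=1.0)
delay_ms = queue_delay_ms(backlog, service_fps=58)
print(backlog, round(delay_ms))
```

Under these assumptions, one second of operation already adds roughly a second of queueing delay, an order of magnitude past a 100ms budget.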

Eight cameras simultaneously

| Device | Inference latency p95 | Sustained FPS (aggregate) | GPU utilization |
|---|---|---|---|
| Orin Nano 8GB | not viable | n/a | n/a |
| Orin NX 16GB | 47ms | 240 FPS aggregate | 96% |
| AGX Orin 64GB | 18ms | 240 FPS aggregate | 58% |
| g5.xlarge | 31ms (+38ms network) | 240 FPS aggregate | 41% |

What this shows: this is the breakeven point. NX at 8 cameras is at the edge of its budget: 47ms inference + 25ms preprocessing + 20ms output handling = ~92ms p95 end-to-end, just under our 100ms budget. AGX still has roughly 1.7× headroom (58% GPU utilization). We deployed NX-per-line in production (8 cameras per device, 3 lines = 3 NX nodes).
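The budget arithmetic above is easy to encode as a check you can rerun whenever a stage changes. This is a small sketch; the function names are illustrative, and the 25ms and 20ms stage costs are the figures quoted for the 8-camera NX case only.

```python
BUDGET_MS = 100.0


def end_to_end_ms(inference_ms, preprocess_ms=25.0, output_ms=20.0):
    """Sum of the three pipeline stages named in the text."""
    return inference_ms + preprocess_ms + output_ms


def headroom_ms(inference_ms, budget_ms=BUDGET_MS):
    """Positive means within budget; negative means over budget."""
    return budget_ms - end_to_end_ms(inference_ms)


nx_8cam_total = end_to_end_ms(47.0)
nx_8cam_headroom = headroom_ms(47.0)
print(nx_8cam_total, nx_8cam_headroom)
```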

Twelve cameras simultaneously (single device)

| Device | Inference latency p95 | Sustained FPS (aggregate) | GPU utilization |
|---|---|---|---|
| Orin NX 16GB | 78ms (over budget) | 360 FPS aggregate | 99% (saturated) |
| AGX Orin 64GB | 28ms | 360 FPS aggregate | 84% |

What this shows: NX falls out of budget at 12 cameras. AGX Orin handles 12 cameras with margin — and could likely handle 16-18 before saturating. This is where AGX earns its price tag.

Power draw and thermals

These numbers matter. Industrial enclosures heat up. Conveyor floors are dusty, vibrating, and rarely climate-controlled.

| Device | Idle power | Peak inference power | Sustained avg (8-cam workload) | Required cooling |
|---|---|---|---|---|
| Orin Nano 8GB | 3W | 15W | n/a (not viable at 8-cam) | passive heatsink |
| Orin NX 16GB | 4W | 25W | 21W | active fan, 40mm |
| AGX Orin 64GB | 8W | 60W | 38W | active fan, 80mm + heat pipes |
| g5.xlarge (cloud) | 80W | 280W | 180W | datacenter cooling |

What this shows: Jetson power draw is a real differentiator. Three Orin NX nodes (one per line) draw ~63W steady-state (3 × 21W) for the entire CV system. A single g5.xlarge consumes ~180W and adds network latency on top. Multiply by a typical data-center PUE of 1.5 and the cloud system actually consumes ~270W per equivalent compute slot.
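The power comparison reduces to two multiplications, sketched below with the figures from the table. The helper names are illustrative, and the PUE of 1.5 is the typical value quoted in the text rather than a measured one.

```python
def fleet_power_w(nodes, avg_w_per_node):
    """Steady-state draw of an edge fleet."""
    return nodes * avg_w_per_node


def facility_power_w(it_power_w, pue):
    """IT load scaled by power usage effectiveness to include cooling and overhead."""
    return it_power_w * pue


edge_w = fleet_power_w(nodes=3, avg_w_per_node=21.0)
cloud_w = facility_power_w(it_power_w=180.0, pue=1.5)
print(edge_w, cloud_w)
```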

For factory floor deployments where every device shares a 24V industrial power rail with PLCs and other sensors, low TDP isn't aesthetic — it's a real engineering constraint that determines what enclosure size, cooling solution, and power budget allocation you can use. AGX Orin at 60W peak forces you into a larger enclosure, more aggressive cooling, and often a dedicated power feed. NX at 25W fits in a standard DIN-rail enclosure with passive-augmented cooling.

Cost analysis: 3-year TCO for the 24-camera deployment

We modeled three approaches over a 36-month operating window. CapEx upfront, OpEx monthly, all-in including support, replacement spares, network costs, and engineering time.

Option A: Cloud-only (the pre-existing setup)

- 1× g5.xlarge instance (handles 24 cameras): $1,006/month × 36 = $36,216

- Network egress: ~$180/month for video streams (compressed) = $6,480

- Reserved instance discount (3-year all-upfront, ~62%): brings instance to ~$382/month

- Total cloud TCO (with reservation): ~$20,232 over 36 months

- Risk: dependency on factory network reliability

Option B: Edge-only with Orin NX (the production choice)

- 3× Jetson Orin NX 16GB SoMs + carrier boards + NEMA 4 enclosures + cooling: ~$1,800 each fully deployed = $5,400

- Edge gateway switch + cabling: $1,200

- Spare unit (1 hot spare): $1,800

- Engineering setup time (one-time): ~$8,000

- Maintenance + over-the-air model updates: ~$200/month × 36 = $7,200

- Total edge TCO: ~$23,600 over 36 months

Option C: Hybrid — Edge inference + cloud retraining

- Edge fleet (Option B's one-time costs: hardware, switch, spare, and setup): $16,400

- Cloud for retraining + aggregated analytics: ~$280/month × 36 = $10,080

- Total hybrid TCO: ~$26,480 over 36 months
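The three totals can be recomputed from the line items above, which is useful when you substitute your own prices. This sketch uses only the figures listed for each option, including the $16,400 edge figure quoted for Option C; the variable names are illustrative.

```python
MONTHS = 36

# Option A: reserved instance plus egress, both monthly.
option_a = (382 + 180) * MONTHS

# Option B: one-time costs (3 nodes, switch, spare, setup) plus monthly maintenance.
edge_one_time = 3 * 1_800 + 1_200 + 1_800 + 8_000
option_b = edge_one_time + 200 * MONTHS

# Option C: the edge figure quoted for the hybrid option plus monthly cloud spend.
option_c = 16_400 + 280 * MONTHS

b_over_a_pct = round(100 * (option_b - option_a) / option_a, 1)
c_over_b_pct = round(100 * (option_c - option_b) / option_b, 1)
print(option_a, option_b, option_c, b_over_a_pct, c_over_b_pct)
```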

What the cost numbers actually show

Option B (edge-only) and Option A (cloud-only with 3-year reserved instances) are within about 17% of each other on TCO ($23,600 vs $20,232). The cloud option is slightly cheaper if you commit to a reservation, but you trade flexibility, you depend on factory network uptime, and you eat 38ms of network latency that the edge solution doesn't pay.

In practice, the deployment that wins is determined by reliability requirements, not cost. Our client had two production-line outages in six months caused by intermittent factory network problems. That's a $40,000-60,000 incident each time (idle line, missed throughput). Eliminating that risk justified the edge migration regardless of TCO parity.

The hybrid Option C is what we ship 80% of the time: edge for inference, cloud for retraining and dashboards. It is about 12% more expensive than pure edge ($26,480 vs $23,600), but you get the analytics layer without rebuilding it.

The INT8 quantization journey

We don't ship FP16 inference to production unless we have to. INT8 with TensorRT calibration is the default. The throughput and power numbers above all assume INT8.

Optimization steps (Orin NX 16GB, YOLOv8m, single-camera baseline)

| Optimization stage | Inference latency p95 | Throughput | mAP@0.5 |
|---|---|---|---|
| PyTorch FP32 (baseline) | 89ms | 11 FPS | 0.913 |
| ONNX export + TensorRT FP16 | 31ms | 32 FPS | 0.913 |
| TensorRT INT8 (PTQ, 1k calibration imgs) | 14ms | 71 FPS | 0.901 |
| TensorRT INT8 + DLA-0 (selected layers) | 12ms | 83 FPS | 0.901 |
| TensorRT INT8 + DLA-0 + DLA-1 | 11ms | 91 FPS | 0.898 |

What this shows: the move from FP32 to TensorRT FP16 alone gets you ~3× throughput at zero accuracy cost. INT8 PTQ adds another ~2× at the cost of a 1.2-percentage-point mAP drop, which is usually acceptable. DLA (Deep Learning Accelerator) cores on Jetson Orin add another 17-28% throughput in the table above (71 → 83 → 91 FPS) by offloading specific layers to dedicated hardware, freeing up the GPU for other work.

The DLA gain compounds when you have multiple cameras: with both DLA cores active running select layers, the GPU is freed to process additional camera streams in parallel. This is how we squeezed eight cameras onto one NX 16GB.
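To make the INT8 step less of a black box, here is a toy sketch of symmetric INT8 quantization in plain Python. It is a simplified illustration, not TensorRT's calibration algorithm: the scale comes from the maximum absolute value, and the weight values are hypothetical. It also shows why scale granularity matters when value ranges differ widely between channels.

```python
def quantize(values, scale):
    """Symmetric INT8: round to the nearest step and clamp to [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def dequantize(quantized, scale):
    """Map INT8 codes back to real values."""
    return [q * scale for q in quantized]


def max_abs_error(values, scale):
    """Worst-case round-trip error for one group of values."""
    restored = dequantize(quantize(values, scale), scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Two hypothetical weight channels with very different ranges.
channels = [[0.50, -1.20, 2.54], [0.004, -0.010, 0.0127]]

# Per-tensor: one scale from the largest magnitude in the whole tensor.
tensor_scale = max(abs(v) for ch in channels for v in ch) / 127
# Per-channel: one scale per channel.
channel_scales = [max(abs(v) for v in ch) / 127 for ch in channels]

per_tensor_err = [max_abs_error(ch, tensor_scale) for ch in channels]
per_channel_err = [max_abs_error(ch, s) for ch, s in zip(channels, channel_scales)]
print(per_tensor_err, per_channel_err)
```

With a single shared scale, the small-valued channel collapses to a handful of codes and loses most of its information; a per-channel scale keeps its error several orders of magnitude lower.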

Quantization gotchas worth flagging

- Calibration set quality matters more than quantity. We started with 5,000 random training images for calibration and got mAP 0.881. Curating 800 images that covered all six defect classes and all lighting conditions gave us mAP 0.901. Less is more, but only if it's representative.

- Per-tensor vs per-channel quantization affects accuracy disproportionately on detection heads. TensorRT applies per-tensor scales to activations and supports per-channel scales for convolution weights, so confirm in the build log what the detection-head layers actually received, and leave layers that lose too much accuracy in FP16.

- DLA has constraints. Not every layer type runs on DLA. Conv2d, Pooling, Concat, ReLU work; many custom ops don't. The TensorRT log will tell you which layers fall back to GPU. Plan for the fallback overhead in your latency budget.

- INT8 model files are not portable across Jetson tiers. The .engine file is hardware-specific. We maintain three engine builds (Nano, NX, AGX) and select the correct one in the deployment scripts based on the device tegra ID.
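One way to curate a representative calibration set, as described in the first gotcha, is stratified sampling over (defect class, lighting condition) buckets. The sketch below assumes image metadata is available as tuples; the lighting labels, file names, and per-bucket count are hypothetical.

```python
import random
from collections import defaultdict


def stratified_calibration_set(samples, per_bucket, seed=0):
    """Pick up to `per_bucket` images from every (defect class, lighting) bucket."""
    buckets = defaultdict(list)
    for path, defect, lighting in samples:
        buckets[(defect, lighting)].append(path)
    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    chosen = []
    for key in sorted(buckets):
        paths = buckets[key]
        chosen.extend(rng.sample(paths, min(per_bucket, len(paths))))
    return chosen


# Hypothetical metadata: 6 defect classes x 3 lighting conditions x 10 images each.
defects = ["crack", "incomplete", "deformation", "burr", "shift", "contamination"]
lightings = ["day", "night", "mixed"]
samples = [
    (f"{d}_{l}_{i}.png", d, l) for d in defects for l in lightings for i in range(10)
]
calibration = stratified_calibration_set(samples, per_bucket=4)
print(len(calibration))
```

Random sampling over the full training set tends to over-represent whatever condition is most common; bucketing first guarantees that rare defect and lighting combinations contribute to the activation ranges.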

Production gotchas — what dev kits don't tell you

We've shipped Jetson into factories where everything that could go wrong has gone wrong. The list below is the result.

Thermal management

The dev kit fan is not industrial grade. In a NEMA 4 enclosure on a factory floor at 38°C ambient, the stock cooling solution will throttle within 30 minutes. We use:

- 80×80×25mm industrial fan with thermal-controlled PWM (TGY-9015 or equivalent)

- Copper heat pipe transferring heat to enclosure-mounted heatsink

- Thermal pad upgrade between Jetson SoM and chassis

- Closed-loop temperature monitoring via tegrastats, published to MQTT, with an alert if SoC temp stays above 80°C for 5+ minutes
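The alert rule in the last item (above 80°C for five minutes or more) can be sketched as a small state machine. Parsing tegrastats output and publishing to MQTT are left out; this assumes temperature samples arrive as (timestamp in seconds, degrees Celsius), and the class name is illustrative.

```python
class SustainedOverTemp:
    """Fires once temperature has stayed above the limit for hold_s seconds."""

    def __init__(self, limit_c=80.0, hold_s=300.0):
        self.limit_c = limit_c
        self.hold_s = hold_s
        self._since = None  # timestamp when the limit was first exceeded

    def update(self, timestamp_s, temp_c):
        """Feed one sample; returns True while the alert condition holds."""
        if temp_c <= self.limit_c:
            self._since = None  # any sample at or below the limit resets the timer
            return False
        if self._since is None:
            self._since = timestamp_s
        return timestamp_s - self._since >= self.hold_s


monitor = SustainedOverTemp()
readings = [(0, 85.0), (299, 86.0), (300, 86.0), (310, 75.0), (320, 85.0)]
alerts = [monitor.update(t, temp) for t, temp in readings]
print(alerts)
```

Requiring a sustained excursion avoids paging on brief spikes while still catching the slow creep that leads to throttling.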

Without active monitoring you get silent throttling: model still runs, but inference latency creeps from 22ms to 68ms over hours, eventually breaking your latency budget. We caught this on our second factory deployment — the third deployment shipped with monitoring from day one.

Power supply quality

Factory 24V rails are noisy. Dev kits assume clean lab power. In production:

- Industrial DC-DC converter (24V → 19V) rated for 2× peak Jetson draw

- Inline LC filter for high-frequency noise from VFD-driven motors nearby

- UPS-backed circuit for the Jetson rail (15-minute hold-up minimum) — line restart should not trigger model reload
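As a rough sizing aid for the items above, the two rules of thumb (2× peak draw for the converter, 15-minute hold-up for the UPS) translate into the arithmetic below. It ignores converter efficiency and battery derating, and using the sustained average rather than peak for the UPS load is an assumption.

```python
def converter_rating_w(peak_w, margin=2.0):
    """Minimum DC-DC converter rating for a given peak Jetson draw."""
    return peak_w * margin


def ups_energy_wh(load_w, holdup_min):
    """Usable energy needed to carry `load_w` for `holdup_min` minutes."""
    return load_w * holdup_min / 60.0


# Orin NX figures from the power table: 25W peak, 21W sustained average.
nx_converter_w = converter_rating_w(25.0)
nx_ups_wh = ups_energy_wh(load_w=21.0, holdup_min=15)
print(nx_converter_w, nx_ups_wh)
```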

We had one site where motor inrush from a 30HP press caused Jetson brownouts twice per shift. The brownout would drop the cameras, lose 200ms of frames, and miss two parts. Fixed with a properly sized DC UPS — but it took three weeks to diagnose because Jetson logs only showed the post-reboot side.

Camera time sync

Manufacturing CV needs frame-accurate sync between cameras and PLC events (which part is at which station). Cheap GigE cameras drift by 50-200ms per hour against system clock unless you actively sync. We use:

- PTP (IEEE 1588) hardware-timestamped switch for cameras

- Jetson NTP-disciplined to a factory-floor PTP grandmaster clock

- Frame timestamps captured at hardware decode, not user-space

Without this, your model is making decisions about a part that's already past the reject station. Hard to debug; harder to explain to a plant manager.
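To put numbers on that failure mode, the sketch below converts a clock error into the distance a part has travelled on the conveyor. It uses the ~1.2 m/s line speed from the deployment and the 50-200ms hourly drift range quoted above; the function name is illustrative.

```python
def position_error_mm(conveyor_speed_m_s, clock_error_ms):
    """Distance a part moves during the clock error (m/s x ms = mm)."""
    return conveyor_speed_m_s * clock_error_ms


best_case_mm = position_error_mm(1.2, 50)
worst_case_mm = position_error_mm(1.2, 200)
print(round(best_case_mm), round(worst_case_mm))
```

After one hour of unsynchronized drift, the system's idea of where a part is can be off by several centimeters at best, and by roughly a quarter of a meter at worst.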

Model drift and over-the-air updates

Defect distributions shift over time — new alloy batch, new tooling, seasonal humidity affecting surface oxidation. Models that are 0.91 mAP at deployment can drift to 0.84 mAP in six months. Plan for it:

- Continuous data capture: 1% of frames sampled and uploaded to cloud for review

- Weekly drift monitoring: per-class precision/recall vs baseline

- Quarterly model retrain + A/B deploy via OTA — 10% of cameras get new model first

- Rollback path tested monthly (forced rollback drill)
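The weekly drift check can be as simple as comparing per-class precision and recall against the deployment baseline. In this sketch the 0.03 threshold, the class names, and the metric values are all hypothetical; pick a threshold that matches your own tolerance per failure mode.

```python
def drifted_classes(baseline, current, max_drop=0.03):
    """Return classes whose precision or recall fell more than `max_drop` below baseline.

    Both arguments map class name -> (precision, recall).
    """
    flagged = {}
    for name, (base_p, base_r) in baseline.items():
        cur_p, cur_r = current[name]
        drop = max(base_p - cur_p, base_r - cur_r)
        if drop > max_drop:
            flagged[name] = round(drop, 3)
    return flagged


baseline = {"crack": (0.93, 0.90), "burr": (0.91, 0.88)}
this_week = {"crack": (0.92, 0.89), "burr": (0.90, 0.81)}
flagged = drifted_classes(baseline, this_week)
print(flagged)
```

Tracking per class matters because an aggregate mAP can look stable while one rare defect class quietly degrades.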

OTA on Jetson is straightforward but failure-mode-heavy. We use a dual-partition layout (A/B), .engine files versioned alongside Python deployment scripts, atomic switchover with health check, automatic rollback if inference latency exceeds 2× baseline within 60 seconds of switchover.
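The automatic rollback rule can be sketched as a pure function over post-switchover latency samples. Treating a single sample above the threshold as a trigger is an assumption made for brevity; a production watchdog would more likely use a rolling percentile to avoid reacting to one slow frame.

```python
def should_roll_back(samples, baseline_ms, window_s=60.0, factor=2.0):
    """Decide whether to revert to the previous partition.

    `samples` holds (seconds_since_switchover, latency_ms) pairs. Returns True
    if any sample inside the window exceeds `factor` times the baseline.
    """
    limit_ms = factor * baseline_ms
    return any(t <= window_s and latency > limit_ms for t, latency in samples)


healthy = [(5.0, 24.0), (30.0, 31.0), (90.0, 80.0)]  # the spike falls outside the window
regressed = [(5.0, 24.0), (30.0, 47.0)]  # 47ms exceeds 2 x 22ms inside the window
print(should_roll_back(healthy, 22.0), should_roll_back(regressed, 22.0))
```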

When cloud still wins for manufacturing CV

Edge isn't always right. The cases where we've kept inference in cloud:

- Batch quality audits: monthly review of stored video for compliance reporting, anomaly investigation. Not latency-sensitive. Cloud GPU is cheaper than provisioning edge for occasional batch work.

- Multi-site model training: training data flows from edge to cloud, training happens centrally, models deploy back to edge. This is the "hybrid" Option C above and the right pattern.

- Latency budgets >500ms with reliable network: e.g., end-of-line inspection where part is held for ~1 second between stations. Network latency doesn't bite. Cloud's elastic scaling handles spikes (new product line ramp-up) better than fixed edge fleet.

- Ad-hoc analytics over historical footage: "show me every part that had defect type X in the last 60 days." Edge nodes don't store enough video. Cloud + object storage is the right home for this.

What we never do at the edge: model training, hyperparameter sweeps, batch processing of >1 hour of video, or multi-tenant aggregation of more than 32 cameras into one device. Those workloads belong in cloud.

Decision tree: which Jetson tier?

Three questions, in order:

1. How many cameras will share one device?

- 1-4 cameras: Orin Nano 8GB is sufficient if you can use YOLOv8s or smaller. Otherwise NX.

- 5-8 cameras: Orin NX 16GB. Default choice for most manufacturing lines.

- 9-16 cameras: AGX Orin 64GB. NX runs out of budget.

- 17+ cameras: split into multiple devices or use a server-grade GPU edge node (Lenovo SE350 with NVIDIA L4, similar). One Jetson is the wrong topology past 16 cameras.

2. What's the model class?

- Lightweight detection (YOLOv8s, MobileNet-V3 SSD): Orin Nano is viable. Most quality inspection tasks fit here.

- Mid-size detection (YOLOv8m, EfficientDet-D2): NX minimum. Most defect detection workloads.

- Multi-stage pipelines (detect → segment → classify; detect → track → re-ID): AGX Orin. The DLA cores let you parallelize stages without GPU contention.

- Transformer-based vision (DETR, OWL-ViT): AGX Orin only. NX struggles, Nano not viable.

3. What's the latency budget?

- Under 50ms: Edge required. NX or AGX depending on cameras + model.

- 50-150ms: Edge strongly preferred. Network latency to nearest cloud region eats half your budget.

- 150-500ms: Edge or cloud both work. Decide on cost + reliability requirements.

- Over 500ms: Cloud is usually cheaper. Edge only if connectivity is a real risk.

For ~70% of manufacturing CV workloads we've shipped, the answers are: 5-8 cameras, YOLOv8m-class model, sub-100ms budget. Orin NX 16GB.

For ~20% of workloads (multi-stage pipelines or 12+ cameras): AGX Orin.

For ~10% of workloads (constrained budget, simple detection, 1-4 cameras): Orin Nano.

We rarely default to AGX. If you find yourself defaulting to AGX, the smell is usually "we're using the same hardware we picked for development without re-evaluating for production." Re-evaluate.
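The three questions can be folded into one function, sketched below. It is a simplification of the decision tree: it takes the more demanding of the camera-count tier and the model-class tier, treats budgets over 500ms as a cloud case, and does not model the 150-500ms range where either option works. The model-class labels are illustrative names.

```python
TIERS = ["Orin Nano 8GB", "Orin NX 16GB", "AGX Orin 64GB"]

# Minimum tier index per model class, following question 2.
MODEL_MIN_TIER = {"lightweight": 0, "mid": 1, "multi_stage": 2, "transformer": 2}


def choose_tier(cameras, model_class, latency_budget_ms):
    """Return a tier recommendation from camera count, model class, and budget."""
    if cameras < 1:
        raise ValueError("need at least one camera")
    if latency_budget_ms > 500:
        return "cloud (edge only if connectivity is a real risk)"
    if cameras > 16:
        return "split across devices or use a server-grade edge GPU"
    if cameras <= 4:
        camera_tier = 0
    elif cameras <= 8:
        camera_tier = 1
    else:
        camera_tier = 2
    # Whichever question demands more hardware wins.
    return TIERS[max(camera_tier, MODEL_MIN_TIER[model_class])]


typical = choose_tier(cameras=8, model_class="mid", latency_budget_ms=100)
print(typical)
```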

Migration playbook — moving from cloud inference to Jetson edge

The migration from g5.xlarge to NX edge took us four engineering weeks. The plan:

Week 1: Model export + quantization

- Export PyTorch model to ONNX (opset 17 minimum for YOLOv8 ops compatibility)

- Verify ONNX correctness against PyTorch on a test set (mAP within 0.005)

- Build TensorRT FP16 engine, verify accuracy preservation

- Build TensorRT INT8 engine with PTQ calibration, accept up to 1.5pp mAP drop

- Validate on 2-week historical production traffic offline
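The two accuracy gates in this week's checklist (ONNX within 0.005 mAP of PyTorch, INT8 within 1.5 percentage points) can be written as explicit checks so a failed export stops the pipeline. This is a minimal sketch with illustrative function names; the mAP values in the example come from the optimization table earlier in the article.

```python
def onnx_matches(pytorch_map, onnx_map, tolerance=0.005):
    """ONNX export gate: mAP must stay within `tolerance` of the PyTorch model."""
    return abs(pytorch_map - onnx_map) <= tolerance


def int8_acceptable(reference_map, int8_map, max_drop_pp=1.5):
    """INT8 gate: the mAP drop, in percentage points, must not exceed `max_drop_pp`."""
    return (reference_map - int8_map) * 100.0 <= max_drop_pp


curated_ok = int8_acceptable(0.913, 0.901)  # curated calibration set
random_ok = int8_acceptable(0.913, 0.881)   # random calibration set
print(curated_ok, random_ok)
```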

Week 2: Edge pipeline development

- Write inference service in C++ or Python (Python is fine if your pipeline is GIL-friendly; we use Python with tritonclient for ease)

- Hardware decode pipeline using GStreamer with nvv4l2decoder for camera streams

- Preprocess on GPU using CUDA kernels (avoid CPU-GPU memcopy per frame)

- MQTT publisher with QoS 1 + buffered failover for network blips

- Health check endpoint + Prometheus metrics
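The buffered-failover item is worth a sketch, since it is the piece most often skipped. The class below keeps results in a bounded queue while the broker is unreachable and flushes them in order once it returns. The `send` callable is a hypothetical stand-in for a real MQTT client's publish call; QoS handling is left to that client.

```python
from collections import deque


class BufferedPublisher:
    """Queues results while the broker is down and flushes them in order."""

    def __init__(self, send, max_buffered=1000):
        self._send = send  # callable(topic, payload) -> True on success
        self._pending = deque(maxlen=max_buffered)  # oldest entries dropped when full

    def publish(self, topic, payload):
        self._pending.append((topic, payload))
        return self.flush()

    def flush(self):
        while self._pending:
            topic, payload = self._pending[0]
            if not self._send(topic, payload):
                return False  # broker unreachable; keep the backlog for later
            self._pending.popleft()
        return True

    @property
    def backlog(self):
        return len(self._pending)


# Fake transport for illustration: succeeds only while broker_up["ok"] is True.
broker_up = {"ok": False}
delivered = []


def fake_send(topic, payload):
    if broker_up["ok"]:
        delivered.append((topic, payload))
    return broker_up["ok"]


publisher = BufferedPublisher(fake_send, max_buffered=100)
publisher.publish("line1/cam3", "fail")  # buffered: broker is down
publisher.publish("line1/cam3", "pass")
backlog_during_outage = publisher.backlog
broker_up["ok"] = True
publisher.publish("line1/cam4", "pass")  # flushes the backlog in order
print(delivered, publisher.backlog)
```

The bounded queue is a deliberate choice: during a long outage it is better to lose the oldest results than to exhaust memory on the inference node.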

Week 3: Hardware bring-up and field test

- Burn-in test for 72 hours at target ambient temperature in test enclosure

- Camera sync verification with PTP

- End-to-end latency measurement with synthetic test pattern

- Deploy to one production line in shadow mode (parallel with cloud, decisions logged not acted on)

Week 4: Cutover

- Compare shadow-mode decisions vs cloud decisions over 5+ shifts (target: agreement >99.5%)

- Switch one line to edge-only, monitor for 48 hours

- Roll out to remaining lines with 24-hour gap between each

- Decommission cloud inference instance (keep training infra)
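The shadow-mode comparison in the first step comes down to an agreement rate over paired decisions. The sketch below assumes both systems log one pass/fail decision per part in the same order; the decision values are hypothetical.

```python
def agreement_rate(edge_decisions, cloud_decisions):
    """Fraction of parts where edge and cloud reached the same decision."""
    if not edge_decisions or len(edge_decisions) != len(cloud_decisions):
        raise ValueError("need two non-empty decision logs of equal length")
    matches = sum(e == c for e, c in zip(edge_decisions, cloud_decisions))
    return matches / len(edge_decisions)


# Hypothetical shift: 1,000 parts, 4 disagreements.
cloud_log = ["pass"] * 1000
edge_log = ["fail"] * 4 + ["pass"] * 996
rate = agreement_rate(edge_log, cloud_log)
cutover_ok = rate >= 0.995
print(rate, cutover_ok)
```

It is worth reviewing the disagreements by hand as well, since a small number of misses concentrated in one defect class is a different problem from the same number spread evenly.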

We've done this migration four times now. Every project hits at least one surprise — a camera firmware quirk, a thermal issue, an edge case in PTP sync. Plan for one week of float in the schedule.

What this article didn't cover

- Multi-modal pipelines: vision + audio (e.g., bearing failure detection combining visual cracks + acoustic signature). Different class of problem; we'll cover separately.

- Synthetic data generation for rare defect classes: how we use Stable Diffusion for augmenting low-frequency defects in training sets. Different topic.

- Comparison with non-NVIDIA edge hardware (Hailo, Coral TPU, RK3588 NPU): we've evaluated these for specific workloads. Short answer: Hailo-8 is competitive with Orin NX on pure vision throughput per watt, but the software ecosystem is significantly less mature, and integration time is 3-4× longer than Jetson. Worth it for power-constrained edge applications (battery-powered, remote sites). Not worth it for industrial wired deployments.

- Multi-tenancy on a single Jetson: running multiple models for different production lines. Doable on AGX with MIG-like partitioning; we've shipped one such deployment. Different article.

If any of these are relevant to your deployment, the contact form gets you a 45-minute conversation with the engineers who built the deployment described above.

Closing

We've shipped Jetson into 14 manufacturing deployments since 2024. The Orin generation (released late 2022) made edge CV genuinely production-grade — the previous Xavier generation forced too many compromises on model class or camera count. The Orin lineup, paired with TensorRT INT8 quantization and DLA acceleration, covers the workload range for ~95% of factory CV applications without the latency, cost, and reliability tradeoffs of cloud inference.

The engineering work is in the integration: thermal, power, time sync, OTA, model drift. None of it is glamorous. All of it determines whether your deployment is in production for five years or replaced after eighteen months.

If you're planning a manufacturing CV deployment in 2026 and the choice between edge tiers is unclear, we offer a 45-minute discovery call — no pitch, just a conversation about your camera count, model class, latency budget, and the engineering work between dev kit and shipping factory floor.